Table of Contents

- What Is LLMO (Large Language Model Optimization)?

- Why LLMO Matters in 2026

- LLMO vs. SEO vs. GEO vs. AEO: A Clear Comparison

- How Large Language Models Discover and Select Content

- The AI-fy TRIAD Framework: Three Pillars of AI Visibility

- Pillar 1: Structure (To Be Found)

- Pillar 2: Content (To Be Cited)

- Pillar 3: Authority (To Be Recommended)

- The Complete LLMO Implementation Checklist

- How to Measure LLMO Success

- Common LLMO Mistakes (and How to Avoid Them)

- LLMO Timeline: When to Expect Results

- Frequently Asked Questions About LLMO

- Next Steps: Your AI Visibility Roadmap

Section 1

What Is LLMO (Large Language Model Optimization)?

When a potential customer asks ChatGPT "What's the best consulting firm for supply chain optimization in Germany?" or prompts Perplexity with "Who should I hire for brand strategy in Amsterdam?", the AI does not show a list of ten blue links. It synthesizes a direct answer, often recommending one to three businesses by name.

The question is: will that answer include your business?

If you have not optimized for large language models, the answer is almost certainly no. Your website might rank on page one of Google and still be completely invisible to AI.

LLMO solves this problem. It combines technical infrastructure (can AI crawlers access your site?), content architecture (can AI extract clean answers from your pages?), and entity authority (does AI trust you enough to recommend you?) into a systematic optimization practice.

The term "LLMO" is the most technically precise description of this discipline. It refers specifically to the large language models (GPT-4, Gemini, Claude, LLaMA) that power modern AI assistants and AI search. Other terms you may encounter, such as GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization), address overlapping but narrower aspects of the same challenge. We will map these distinctions clearly in the next section.

The core premise of LLMO is simple: SEO is about being found. LLMO is about being the answer.

Section 2

Why LLMO Matters in 2026

The shift from link-based search to AI-generated answers accelerated dramatically between 2024 and 2026. Several data points illustrate the magnitude of this change.

ChatGPT passed 600 million monthly active users in early 2025, and that number has continued to grow. Google's Gemini surpassed 350 million monthly active users. Perplexity, Claude, and Microsoft Copilot each serve millions more. These are not novelty tools anymore. For many professionals and consumers, conversational AI has become the first stop for research, comparison, and purchasing decisions.

Meanwhile, traditional search is evolving from within. Google's AI Overviews now appear for a significant share of queries, providing synthesized answers directly in the search results. The result is a sharp decline in click-through rates to individual websites, even for pages that rank in the top three positions. Research from Ahrefs documented a 34% drop in traditional organic clicks attributed to AI-generated snippets replacing the need to visit source pages.

Traffic from generative AI sources to retail sites increased by 1,300% between late 2024 and late 2025, according to Adobe's research. The trajectory has not slowed.

For business owners and founders, the implication is clear: your customers are asking AI for recommendations. If AI does not know who you are, it cannot recommend you. And unlike traditional search, where ranking improvements happen gradually, AI recommendations create a compounding advantage. Once a model associates your brand with a topic, that association tends to persist and strengthen over time.

Early adoption of LLMO is not optional. It is a structural advantage.

Section 3

LLMO vs. SEO vs. GEO vs. AEO: A Clear Comparison

The proliferation of acronyms (LLMO, GEO, AEO, AIO, GAIO, SGO) has created genuine confusion in the industry. Here is a clear breakdown of what each term means and how they relate.

| Discipline | Full Name | Primary Goal | Optimizes For | Key Metric |
|---|---|---|---|---|
| SEO | Search Engine Optimization | Rank higher in search results | Google, Bing (SERP rankings) | Rankings, CTR, organic traffic |
| AEO | Answer Engine Optimization | Be the direct answer | Featured snippets, voice search, PAA | Snippet appearance rate |
| GEO | Generative Engine Optimization | Be cited in AI summaries | AI Overviews, ChatGPT, Perplexity | Citation count, answer share |
| LLMO | Large Language Model Optimization | Be understood, trusted, and recommended by AI | All LLM surfaces (chat, search, embedded AI) | Recommendation frequency, entity recognition, sentiment |

How These Disciplines Layer Together

SEO remains the foundation. Clean site architecture, quality content, and domain authority still feed directly into how AI systems discover and trust your content. Without a crawlable, well-structured website, no amount of AI optimization will help.

AEO builds on SEO by formatting content for direct answer extraction. This includes writing concise answer paragraphs, implementing FAQ schema, and structuring headings as natural language questions. AEO excels at zero-click search surfaces and voice assistants.

GEO extends the strategy to AI-generated summaries and multi-source synthesis. It emphasizes depth, fact density, authoritative sourcing, and presence on platforms that AI training pipelines reference (such as Wikipedia, Reddit, and major publications).

LLMO is the broadest and most technically precise layer. It encompasses both AEO and GEO while adding machine-readability, entity clarity, knowledge graph integration, and the technical infrastructure (robots.txt, llms.txt, schema markup) that allows AI to fully understand who you are, what you do, and why you are credible. LLMO asks not just "Will AI cite my page?" but "Does AI understand my business as a complete entity?"

Section 4

How Large Language Models Discover and Select Content

Large language models encounter your content through two pathways: the data they are trained on and the pages they retrieve at answer time.

Pathway 1: Training Data

Every major LLM is trained on massive datasets that include web pages, books, academic papers, and publicly available text. During training, the model builds internal representations of entities, relationships, and knowledge. If your business was well-represented in the training data (through consistent naming, high-quality content, and authoritative sourcing), the model develops a baseline "awareness" of your brand.

This pathway is slow. Training data updates happen on cycles measured in months, not days. You cannot directly control what gets included. But you can influence it by ensuring your content is present on high-authority, frequently crawled sources and by maintaining consistent entity information across the web.

Pathway 2: Retrieval Augmented Generation (RAG)

Modern AI systems do not rely solely on training data. When ChatGPT, Perplexity, or Google's AI Overviews generate an answer, they often perform real-time web searches, retrieve relevant pages, and synthesize responses from fresh content. This process is called Retrieval Augmented Generation (RAG).

RAG is where LLMO has the most immediate impact. When an AI system searches for information to answer a user query, it breaks the question into sub-queries, retrieves top-ranking pages for each, and then selects passages that best answer the question. Your content needs to rank for these sub-queries (which is where SEO still matters) and be formatted in a way that the AI can extract clean, authoritative passages (which is where LLMO content optimization becomes critical).
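
The retrieval step described above can be illustrated with a deliberately simplified sketch. Everything in it is an assumption made for illustration: real systems rely on search indexes and learned relevance models rather than the word-overlap score used here, and the business name, passages, and function names are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(query: str, passage: str) -> float:
    """Fraction of query words that also appear in the passage (toy relevance score)."""
    query_words = tokenize(query)
    if not query_words:
        return 0.0
    return len(query_words & tokenize(passage)) / len(query_words)


def select_passages(sub_queries: list[str], passages: list[str]) -> dict[str, str]:
    """For each sub-query, pick the passage with the highest overlap score."""
    return {q: max(passages, key=lambda p: overlap_score(q, p)) for q in sub_queries}


# Hypothetical page passages: two fact-dense, one pure marketing language.
PASSAGES = [
    "Acme Consulting is a supply chain optimization firm based in Hamburg, Germany.",
    "Our team loves delivering world-class synergy for forward-thinking clients.",
    "Acme Consulting pricing starts with a fixed-fee audit followed by monthly retainers.",
]

# Hypothetical sub-queries an assistant might derive from one user question.
SUB_QUERIES = [
    "supply chain optimization firm Germany",
    "Acme Consulting pricing",
]

selected = select_passages(SUB_QUERIES, PASSAGES)
for query, passage in selected.items():
    print(f"{query!r} -> {passage!r}")
```

Even in this toy version, the marketing sentence is never selected because it shares no terms with either sub-query, while the two specific, self-contained passages each win one.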

What Determines Which Content Gets Selected?

Across both pathways, AI systems evaluate content on several dimensions:

| Factor | What the AI Evaluates | LLMO Action |
|---|---|---|
| Accessibility | Can the AI crawler access and read the page? | Configure robots.txt, use SSR, create llms.txt |
| Clarity | Is the answer clearly stated and easy to extract? | Answer-first paragraphs (40 to 60 words) |
| Authority | Is this source credible and verifiable? | Schema markup, E-E-A-T signals, knowledge graph |
| Freshness | Is the content recent and actively maintained? | Visible timestamps, quarterly content reviews |
| Fact density | Does the content include verifiable data points? | Statistics, citations, original research |
| Entity clarity | Is it clear who wrote this and for what business? | Consistent naming, author bios, sameAs links |

A Princeton/Georgia Tech study presented at KDD 2024 found that content enriched with named sources, expert perspectives, and specific data points is measurably more likely to be cited by AI engines. The study also found that effectiveness varies by niche: business content benefits most from named expert quotes, while technology content benefits from authoritative citations.

Section 5

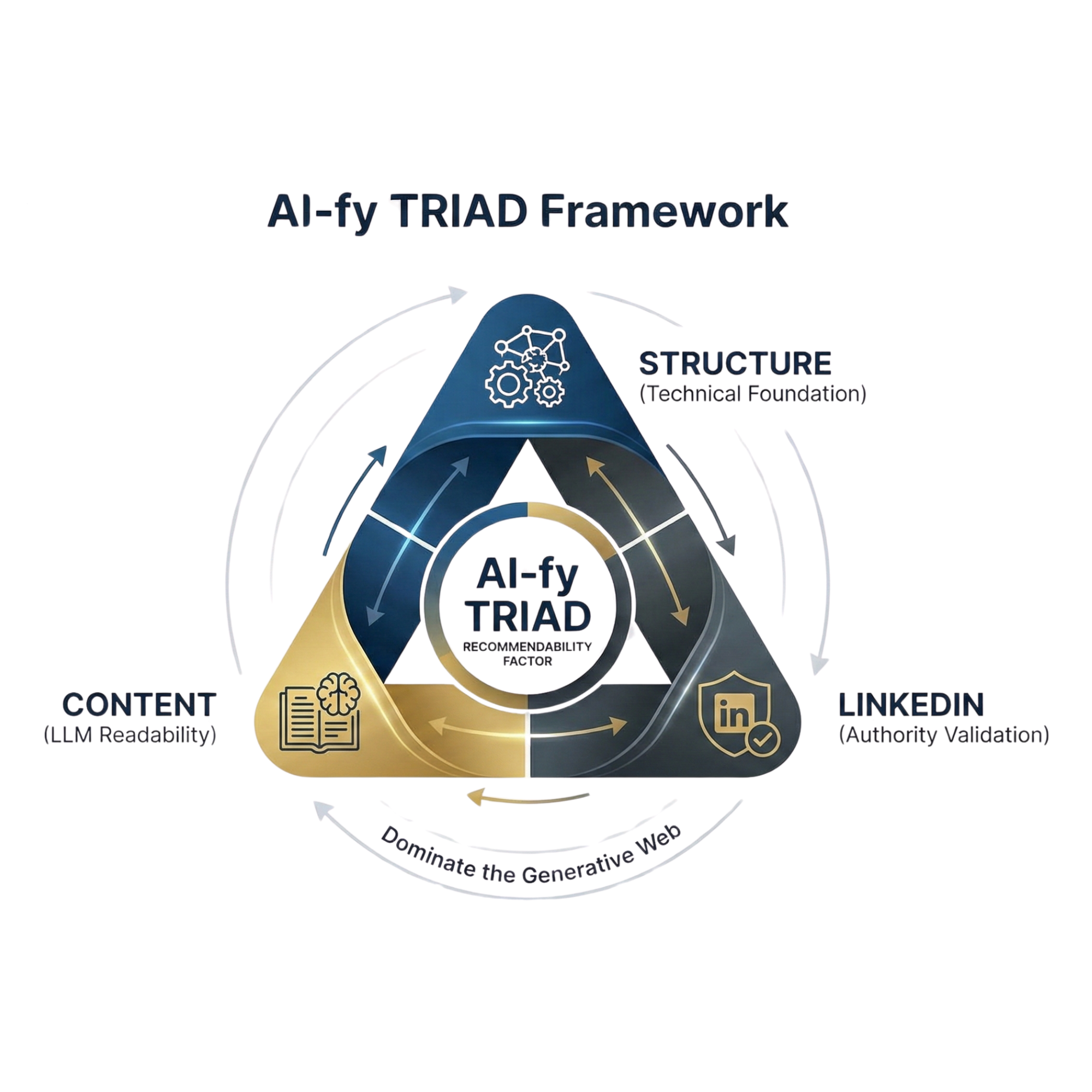

The AI-fy TRIAD Framework: Three Pillars of AI Visibility

Most LLMO guides list tactics without structure. They tell you to "fix your robots.txt" and "add schema markup" without explaining how these pieces connect or which to prioritize.

The AI-fy TRIAD Framework, developed by AI-fy.me, solves this by organizing every LLMO action into three interdependent pillars. Each pillar addresses a specific question that AI systems ask about your business, and each builds on the one before it.

Structure

Step 1: To Be Found

Technical foundation that lets AI crawlers access, read, and index your content.

Content

Step 2: To Be Cited

AI-readable content that positions you as the authoritative source in your domain.

Authority

Step 3: To Be Recommended

Schema markup + LinkedIn linking that creates a verified loop of trust AI can validate.

The framework is sequential by design. There is no point optimizing content if AI crawlers cannot access your site. There is no point building authority signals if your content is not formatted for AI extraction. Each pillar removes a specific barrier to AI visibility, and together they form a complete system.

Let us walk through each pillar in detail.

Pillar 1

Structure (To Be Found)

Before AI can recommend your business, it needs to be able to read your website. This sounds obvious, but the majority of business websites fail at this first step. They are technically invisible to AI.

Robots.txt: Your Site's Front Door for AI Bots

The robots.txt file controls which bots can crawl your website. Most websites were configured years ago for traditional search engine bots (Googlebot, Bingbot) and never updated for AI crawlers.

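AI platforms use their own crawler tokens: GPTBot and OAI-SearchBot (OpenAI), ClaudeBot (Anthropic), PerplexityBot (Perplexity), CCBot (Common Crawl), and Google-Extended (a token that controls whether Google may use your content for Gemini, rather than a separate crawler). Under the robots exclusion standard, a bot that is not named in the file falls back to the User-agent: * rules. A restrictive wildcard group, or a platform or CDN default that blocks AI bots, can therefore shut these crawlers out without you noticing. Listing each bot explicitly with an allow rule removes the ambiguity. One wrong line in this file can make your site invisible to AI.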

The fix takes five minutes. The impact can be visible within days.

Check yoursite.com/robots.txt right now. If you see User-agent: * followed by Disallow: /, you are blocking every crawler that has no more specific group of its own, AI crawlers included. If individual AI bots like GPTBot are not mentioned, they inherit the wildcard rules, and they may also be blocked by default depending on your platform's configuration.
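
The check described above can be automated. The following is a minimal sketch using Python's standard urllib.robotparser module. The bot list mirrors the crawlers named in this guide, while the function name, the sample files, and the yoursite.com URL are placeholders chosen for illustration.

```python
from urllib.robotparser import RobotFileParser

AI_BOTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "CCBot", "Google-Extended"]


def audit_robots(robots_txt: str, url: str = "https://yoursite.com/") -> dict[str, bool]:
    """Return, for each AI bot, whether the given robots.txt text lets it fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_BOTS}


# Case 1: a wildcard group that blocks everything, AI crawlers included.
BLOCK_ALL = "User-agent: *\nDisallow: /\n"

# Case 2: one explicit allow group per AI bot, placed before the same wildcard block.
EXPLICIT_ALLOW = "\n\n".join(f"User-agent: {bot}\nAllow: /" for bot in AI_BOTS)
ALLOW_AI_BLOCK_REST = EXPLICIT_ALLOW + "\n\n" + BLOCK_ALL

for label, text in [("block all", BLOCK_ALL), ("explicit allow", ALLOW_AI_BLOCK_REST)]:
    print(label, audit_robots(text))
```

To audit a live site, the same parser can load a real file with set_url() and read(). The sketch parses strings so it runs without network access.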

Sitemap.xml: Your Table of Contents for AI

Your sitemap.xml tells AI systems where your most important content lives. Without it, crawlers have to discover pages by following links, and they may never reach key pages that are poorly linked.

An LLMO-optimized sitemap includes priority signals (the priority element, which marks the pages that matter most), frequency signals (the changefreq element, which states how often content is updated), and accurate lastmod dates. Pages with recent modification dates are more likely to be retrieved during RAG searches, as AI systems have a strong recency bias.
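
As a rough sketch of what such a sitemap contains, the snippet below builds one with Python's standard xml.etree.ElementTree module. The URLs, dates, and values are placeholders, and the function name is hypothetical. The element names and namespace come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[dict[str, str]]) -> str:
    """Serialize pages into a sitemap.xml string with lastmod, changefreq, and priority."""
    ET.register_namespace("", SITEMAP_NS)  # emit the namespace as the default xmlns
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page in pages:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        # Element order follows the sitemap schema: loc, lastmod, changefreq, priority.
        for tag in ("loc", "lastmod", "changefreq", "priority"):
            ET.SubElement(url, f"{{{SITEMAP_NS}}}{tag}").text = page[tag]
    declaration = '<?xml version="1.0" encoding="UTF-8"?>\n'
    return declaration + ET.tostring(urlset, encoding="unicode")


# Placeholder pages: replace with your own URLs and real modification dates.
PAGES = [
    {"loc": "https://yoursite.com/", "lastmod": "2026-01-15",
     "changefreq": "monthly", "priority": "1.0"},
    {"loc": "https://yoursite.com/services", "lastmod": "2026-01-10",
     "changefreq": "monthly", "priority": "0.8"},
]

sitemap_xml = build_sitemap(PAGES)
print(sitemap_xml)
```

Not every consumer uses all three signals. Google, for example, documents that it ignores changefreq and priority, so keeping lastmod truthful is the part most worth automating.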

llms.txt: The Direct Line to AI

The llms.txt file is a newer convention, placed at your website root (yoursite.com/llms.txt), that provides a clean, markdown-formatted summary of your key content specifically for large language models. While robots.txt controls access and sitemap.xml maps structure, llms.txt tells AI directly: "Here is what matters most on this site."

A well-structured llms.txt includes your business name, a concise description, and links to your most important pages with brief context for each. An extended version (llms-full.txt) can provide additional depth.
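
The structure just described can be generated from a small data structure. This is a sketch only: the layout (a title, a one-line summary, then sections of annotated links) follows the public llms.txt proposal as we understand it, and the business name, URLs, and function name are hypothetical placeholders.

```python
def render_llms_txt(
    name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render llms.txt text: H1 name, blockquote summary, then H2 sections of links."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            # Each entry is a markdown link followed by brief context.
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content: substitute your own business details and key pages.
llms_txt = render_llms_txt(
    name="Example Business",
    summary="Example Business provides supply chain consulting for mid-sized manufacturers.",
    sections={
        "Key Pages": [
            ("Services", "https://yoursite.com/services", "What we offer and for whom"),
            ("About", "https://yoursite.com/about", "Founder background and credentials"),
        ],
    },
)
print(llms_txt)
```

Save the output as llms.txt at the site root. A longer variant with more sections could serve as llms-full.txt.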

Server-Side Rendering (SSR)

AI crawlers cannot reliably execute JavaScript. If your website renders content client-side (as many modern JavaScript frameworks do by default), AI crawlers may see an empty page. All critical content must be server-side rendered or delivered as static HTML to ensure AI crawlers can read it.
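
One way to approximate what a crawler without JavaScript sees is to read the raw HTML response and ignore everything inside script tags. The sketch below does this with Python's standard html.parser module on two hypothetical pages. The class and function names are illustrative, and a real audit would fetch your actual page source first.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script> and <style>, roughly what a non-JS crawler reads."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    """Return the text present in the HTML before any JavaScript runs."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def content_survives_without_js(raw_html: str, key_phrase: str) -> bool:
    """True if key_phrase is already present in the unrendered HTML."""
    return key_phrase.lower() in visible_text(raw_html).lower()


# Hypothetical responses: an empty client-side shell versus a server-rendered page.
CLIENT_RENDERED = '<html><body><div id="root"></div><script src="/bundle.js"></script></body></html>'
SERVER_RENDERED = (
    "<html><body><h1>What does Example Business do?</h1>"
    "<p>Example Business audits supply chains.</p></body></html>"
)

print(content_survives_without_js(CLIENT_RENDERED, "audits supply chains"))
print(content_survives_without_js(SERVER_RENDERED, "audits supply chains"))
```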

Page Speed and Crawl Efficiency

AI crawlers are resource-constrained and may abort slow-loading pages. Heavy JavaScript bundles, unoptimized images, and excessive third-party scripts all reduce the likelihood of a complete crawl. Keep your pages fast and lightweight.

Pillar 2

Content (To Be Cited)

Once AI can access your site (Pillar 1: Structure), the next barrier is whether it can extract useful answers from your content. Most business websites are written for humans browsing linearly. AI does not browse linearly. It scans, extracts, and synthesizes. Your content must be formatted for extraction.

Answer-First Paragraphs (The 40 to 60 Word Rule)

Under every heading on your key pages, the first paragraph should be a self-contained answer that directly addresses the question implied by the heading. This paragraph should be between 40 and 60 words, use a clear subject-verb-object structure, and require no additional context to be understood.
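
The length rule is easy to check mechanically. The sketch below counts whitespace-separated words, which is one reasonable way to count but an assumption on our part. The function name is hypothetical, and the sample paragraph is the LLMO vs. SEO answer from this guide's FAQ.

```python
def check_answer_paragraph(paragraph: str, low: int = 40, high: int = 60) -> tuple[bool, int]:
    """Return (within_range, word_count) for an answer-first paragraph."""
    count = len(paragraph.split())  # words = whitespace-separated tokens
    return (low <= count <= high, count)


SAMPLE_ANSWER = (
    "SEO optimizes for search engine rankings and click-through rates. "
    "LLMO optimizes for AI citation and recommendation across conversational AI platforms. "
    "They share technical foundations but differ in content strategy, measurement, "
    "and the discovery surfaces they target. "
    "LLMO builds on SEO rather than replacing it."
)

TOO_SHORT = "We are the best in our industry."

for text in (SAMPLE_ANSWER, TOO_SHORT):
    ok, count = check_answer_paragraph(text)
    print(f"{count} words, within 40 to 60: {ok}")
```

A check like this only enforces length. Whether the paragraph is self-contained and answers the heading still needs a human read.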

This is the paragraph AI is most likely to quote when recommending your business. Think of it as a featured snippet, but written for a conversational AI response rather than a search results page.

Conversational Query Headings

AI reads your H1 first. If it is vague or generic, AI moves on. Your H1 should be a clear statement of what you solve or what the page teaches.

H2 and H3 headings should be phrased as natural language questions that mirror how users prompt AI. Instead of "Our Services" or "Company Overview," use headings like "What does [Your Business] do?", "How does [Your Service] work?", or "Why should I choose [Your Business] over competitors?" These mirror the questions AI is trying to answer.
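
A quick audit can list the H2 and H3 headings on a page that are not phrased as questions. This sketch uses Python's standard html.parser module and treats "ends with a question mark" as the test, which is a simplifying assumption. The class name, function name, and sample page are hypothetical.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect the text of every <h2> and <h3> element in document order."""

    def __init__(self) -> None:
        super().__init__()
        self._current: str | None = None
        self._buffer: list[str] = []
        self.headings: list[tuple[str, str]] = []  # (tag, heading text)

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._current = tag
            self._buffer = []

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            self.headings.append((tag, "".join(self._buffer).strip()))
            self._current = None


def non_question_headings(raw_html: str) -> list[str]:
    """Return H2/H3 headings that do not end with a question mark."""
    collector = HeadingCollector()
    collector.feed(raw_html)
    collector.close()
    return [text for _, text in collector.headings if not text.endswith("?")]


# Hypothetical page fragment mixing generic and conversational headings.
PAGE = (
    "<h1>Supply Chain Consulting for Manufacturers</h1>"
    "<h2>Our Services</h2>"
    "<h2>How does Example Service work?</h2>"
    "<h3>Company Overview</h3>"
)

print(non_question_headings(PAGE))
```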

Comparison and Differentiation Content

AI systems prefer content that clearly distinguishes between alternatives. "X vs. Y" comparisons, feature tables, and explicit differentiation statements signal authority and specificity. When your content clearly articulates how your service or product differs from alternatives, AI has the structured context it needs to make informed recommendations.

Tables, Lists, and Structured Formatting

AI parses structured HTML (proper <table> tags, <ul>/<ol> lists, <details> elements) far more reliably than unformatted prose. Use tables for comparisons, numbered lists for processes, and FAQ sections for common questions. Avoid embedding data in images or PDFs that AI cannot read.

Entity Clarity

Use your full business name, founder name, and location consistently across every page. AI builds a knowledge graph from these entities. Inconsistencies (sometimes "AI-fy," sometimes "AI-fy.me," sometimes "AIFY") fragment your entity identity and reduce the AI's confidence in who you are.

Fact Density and Evidence

Content that includes original statistics, verifiable data points, and citations from credible sources is measurably more likely to be cited by AI. Pure marketing language with subjective claims ("We are the best in our industry") is actively deprioritized. Replace claims with evidence. Name specific numbers, reference specific studies, and cite specific sources.

Implementation

The Complete LLMO Implementation Checklist

| Pillar | Action | Impact | Effort |
|---|---|---|---|
| Structure | Allow GPTBot, ClaudeBot, PerplexityBot, CCBot, Google-Extended, OAI-SearchBot in robots.txt | Critical | Low |
| Structure | Create and submit a clean, dated sitemap.xml | High | Low |
| Structure | Create llms.txt and llms-full.txt at website root | Medium | Low |
| Structure | Verify all key content is server-side rendered (not JS-dependent) | Critical | Medium |
| Structure | Optimize page speed (reduce JS bundles, compress images) | Medium | Medium |
| Structure | Ensure HTTPS across all pages | High | Low |
| Content | Write 40 to 60 word answer snippets under every H2/H3 | High | Medium |
| Content | Convert headings to conversational questions | High | Low |
| Content | Add comparison tables for "X vs. Y" queries | High | Medium |
| Content | Implement FAQ sections on key pages | High | Low |
| Content | Ensure entity consistency (business name, founder, location) | High | Low |
| Content | Add verifiable statistics and source citations | High | Medium |
| Content | Add visible "Last Updated" timestamps on all content pages | Medium | Low |
| Authority | Implement Organization + Person JSON-LD with sameAs | Critical | Medium |
| Authority | Add FAQPage, Article, HowTo schema where relevant | High | Medium |
| Authority | Create verified Website ↔ LinkedIn trust loop | High | Low |
| Authority | Register entity on Wikidata and Crunchbase | Medium | Medium |
| Authority | Build mentions on Reddit, Quora, G2, and industry publications | High | High |
| Authority | Add named author bios with credentials to all content | High | Low |
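
The first Authority item in the checklist, Organization and Person JSON-LD with sameAs, might look like the following sketch. The types and properties used (Organization, Person, name, url, founder, sameAs) are standard schema.org vocabulary. The business, founder, profile URLs, and function name are hypothetical placeholders to replace with your own.

```python
import json


def organization_jsonld(
    name: str,
    url: str,
    founder_name: str,
    org_profiles: list[str],
    founder_profiles: list[str],
) -> str:
    """Return a JSON-LD script tag linking an Organization and its founder to external profiles."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": org_profiles,  # external profiles that describe the same business
        "founder": {
            "@type": "Person",
            "name": founder_name,
            "sameAs": founder_profiles,  # external profiles that describe the same person
        },
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder entity details: use one exact business name everywhere.
snippet = organization_jsonld(
    name="Example Business",
    url="https://yoursite.com/",
    founder_name="Jane Doe",
    org_profiles=["https://www.linkedin.com/company/example-business"],
    founder_profiles=["https://www.linkedin.com/in/jane-doe-example"],
)
print(snippet)
```

For the trust loop to close, the LinkedIn pages listed under sameAs should link back to the same website URL.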

Measurement

How to Measure LLMO Success

LLMO introduces measurement challenges that traditional analytics tools were not built for. When AI recommends your business in a conversation, there is no guaranteed click, no trackable impression, and no ranking position. The recommendation happens inside a chat interface, and the user may act on it without ever visiting your website.

Effective LLMO measurement requires a new set of metrics:

| Metric | What It Measures | How to Track It |
|---|---|---|
| Citation Count | How often AI systems cite or link to your domain | Manual prompt testing, Semrush Enterprise AIO, Brand24 |
| Answer Share | Percentage of AI answers mentioning your brand vs. competitors | Prompt tracking matrix (20 to 30 queries across platforms) |
| Sentiment Analysis | Whether AI portrays your brand accurately and positively | Manual review of AI-generated mentions |
| AI Referral Traffic | Direct traffic from AI platforms to your site | Google Analytics 4 referral source tracking |
| Branded Search Lift | Increase in branded searches after AI exposure | Google Search Console branded query data |
| Entity Recognition | Whether AI recognizes your business when directly asked | Direct brand queries across ChatGPT, Gemini, Claude, Perplexity |
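
For the AI Referral Traffic metric, the underlying idea is to group visits by referring hostname. The sketch below shows that grouping on exported referrer URLs. It is not a GA4 API call, the hostname list is our assumption about which domains these assistants send traffic from, and the function names and sample visits are hypothetical.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for common AI assistants; extend as platforms change.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if it is not a known AI host."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_referral_counts(referrers: list[str]) -> Counter:
    """Count visits per AI platform, ignoring referrers that are not AI platforms."""
    platforms = (classify_referrer(r) for r in referrers)
    return Counter(p for p in platforms if p is not None)


# Hypothetical export of referrer URLs from an analytics tool.
VISITS = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=brand+strategy+amsterdam",
    "https://www.google.com/",
    "https://chatgpt.com/",
]

print(ai_referral_counts(VISITS))
```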

The Prompt Tracking Matrix

The most actionable LLMO measurement tool is a prompt tracking matrix. This involves defining 20 to 30 high-intent queries that your ideal customers might ask AI, then testing them regularly across ChatGPT, Gemini, Claude, and Perplexity.

Organize prompts into four categories: brand-direct queries ("Tell me about [Your Business]"), category queries ("Who are the best [your service] providers in [your market]?"), competitor queries ("Compare [Your Business] to [Competitor]"), and recommendation queries ("I need a [your service] specialist. Who should I hire?").

Track results monthly. Document whether your brand appears, the accuracy of the mention, the sentiment, and your position relative to competitors. Over time, this matrix becomes the most reliable indicator of your LLMO trajectory.
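
A spreadsheet is enough for this, but the matrix can also be kept as structured records and summarized in code. The sketch below computes answer share per platform, defined here as the fraction of recorded answers that mention your brand. The record fields, brand names, and results are hypothetical, and responses are assumed to have been collected and labeled by hand.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptResult:
    """One tested prompt on one platform, with the brands the answer mentioned."""
    platform: str
    category: str  # brand-direct, category, competitor, or recommendation
    query: str
    brands_mentioned: tuple[str, ...]


def answer_share(results: list[PromptResult], brand: str) -> dict[str, float]:
    """Fraction of recorded answers per platform that mention the brand."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for result in results:
        totals[result.platform] += 1
        if brand in result.brands_mentioned:
            hits[result.platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical results from one monthly test run.
RESULTS = [
    PromptResult("ChatGPT", "category", "Who are the best brand strategists in Amsterdam?",
                 ("Competitor A", "Example Business")),
    PromptResult("ChatGPT", "recommendation", "I need a brand strategist. Who should I hire?",
                 ("Competitor A",)),
    PromptResult("Perplexity", "category", "Who are the best brand strategists in Amsterdam?",
                 ("Example Business",)),
    PromptResult("Perplexity", "brand-direct", "Tell me about Example Business",
                 ("Example Business",)),
]

print(answer_share(RESULTS, "Example Business"))
```

Storing one record per prompt per platform per month makes it straightforward to compare runs over time or to break the same calculation down by query category.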

Avoid These

Common LLMO Mistakes (and How to Avoid Them)

| Mistake | Why It Happens | The Fix |
|---|---|---|
| Blocking AI crawlers | Robots.txt was configured for old bots and never updated | Audit robots.txt today. Add explicit allow rules for all 6 major AI crawlers |
| JS-dependent content | Modern frameworks default to client-side rendering | Enable SSR or pre-rendering for all key pages |
| Marketing fluff instead of facts | Website copy was written for branding, not for AI extraction | Replace subjective claims with verifiable data points and named sources |
| No schema markup | Schema felt optional or too technical to implement | Start with Organization + Person schemas and add sameAs attributes |
| Inconsistent entity naming | Different pages use different business name variations | Standardize to one exact business name across all digital properties |
| No llms.txt file | The convention is new and not yet widely adopted | Create a simple markdown file at your site root pointing to key content |
| Optimizing for one AI platform only | Focus on ChatGPT visibility while ignoring Gemini, Perplexity, Claude | Implement LLMO broadly across the technical stack, not platform-specifically |
| Ignoring LinkedIn as authority | LinkedIn seen as "just social media" instead of a knowledge graph source | Treat LinkedIn as your primary entity validation platform. Link it bidirectionally |

Timeline

LLMO Timeline: When to Expect Results

| Phase | Timeline | Focus Area | Expected Outcome |
|---|---|---|---|
| Quick Wins | 0 to 30 days | Robots.txt, sitemap.xml, llms.txt, basic schema | AI crawlers can access and read your site. You become findable. |
| Core Fixes | 30 to 90 days | Answer-first content, conversational headings, comparison tables, FAQ sections | AI starts extracting and citing your content in responses. |
| Authority Building | 90+ days | Full schema with sameAs, LinkedIn trust loop, Wikidata, third-party mentions | AI recommends your business by name with confidence. |

The important insight is that LLMO is not a one-time project. It is an ongoing optimization practice that compounds over time. As AI models update their training data and refine their retrieval processes, the businesses that have consistently maintained their LLMO signals will continue to gain visibility while others plateau or decline.

FAQ

Frequently Asked Questions About LLMO

What is LLMO (Large Language Model Optimization)?

LLMO stands for Large Language Model Optimization. It is the practice of structuring your business's digital presence so that AI systems like ChatGPT, Gemini, Claude, and Perplexity can find your content, understand your authority, and recommend you in their responses. Unlike traditional SEO, which focuses on search engine rankings, LLMO focuses on being the source AI cites when answering questions in your domain.

What is the difference between LLMO and SEO?

SEO optimizes for search engine rankings and click-through rates. LLMO optimizes for AI citation and recommendation across conversational AI platforms. They share technical foundations but differ in content strategy, measurement, and the discovery surfaces they target. LLMO builds on SEO rather than replacing it.

What is the AI-fy TRIAD Framework?

The AI-fy TRIAD Framework is a three-pillar methodology for LLMO developed by AI-fy.me. The pillars are Structure (to be found), Content (to be cited), and Authority (to be recommended). All three must work together for full AI visibility.

How is LLMO different from GEO and AEO?

AEO focuses on direct answer extraction for snippets and voice search. GEO focuses on citation in AI-generated summaries. LLMO is the broadest term, covering full machine-readability and entity authority across all AI surfaces. They overlap by roughly 80% in tactics but differ in scope and measurement.

Does LLMO replace SEO?

No. LLMO builds on SEO fundamentals. Strong site architecture, quality content, and domain authority still matter because AI systems rely on search engine indexes for live retrieval. LLMO adds an AI-specific optimization layer on top of SEO.

What is an llms.txt file and why does it matter?

An llms.txt file is a plain-text markdown file at your website root that provides a curated summary of your key content specifically for large language models. It guides AI directly to your highest-value pages, functioning as a table of contents designed for AI consumption.

How do I measure LLMO success?

Measure citation count, answer share across AI platforms, sentiment analysis of AI mentions, AI referral traffic via GA4, branded search lift, and entity recognition through direct brand queries. A prompt tracking matrix testing 20 to 30 queries monthly is the most actionable measurement tool.

How long does it take for LLMO optimizations to show results?

Technical fixes (robots.txt, sitemap) can show impact within days. Content optimizations typically surface in 2 to 8 weeks. Authority building through schema markup and knowledge graph integration is a 3 to 6 month process. The AI-fy TRIAD Framework structures these into quick wins, core fixes, and long-term authority phases.

Take Action

Next Steps: Your AI Visibility Roadmap

Here is the most efficient path forward, based on the hundreds of businesses AI-fy.me has analyzed:

Start with Structure. Check your robots.txt today. Verify your sitemap. Create an llms.txt file. These actions take less than an hour and immediately remove the most common barrier to AI visibility.

Audit your Content. Review your top five pages. Does each heading open with a clear, 40 to 60 word answer? Are your headings phrased as questions? Do you have at least one comparison table and one FAQ section? If not, these are your next content priorities.

Build your Authority. Implement Organization and Person schema with sameAs attributes. Link to your LinkedIn profile bidirectionally. Register on Wikidata. These are the signals that move you from "citable" to "recommendable."

Your customers are asking AI for recommendations right now. The businesses that invest in LLMO today will be the ones AI recommends tomorrow.

Find Out If AI Can See Your Business

Get a free AI Visibility Check and discover where your business stands in the eyes of ChatGPT, Gemini, and Perplexity.

© Copyright 2026. AI-fy.me. All rights reserved.