SEO vs. LLMO: The Future of Organic Visibility


By Andreas Höfelmeyer

Certified AI Search Architect & Senior Data Analyst

For twenty years, the rules of the digital road were clear. You built a fortress of authority, you optimized for keywords, and Google rewarded you with a blue link on Page 1. You didn't just play the game; you won it.

But recently, you may have noticed a disturbing silence.

While your firm’s reputation remains impeccable offline, your digital traffic is eroding. Worse, when you ask ChatGPT or Perplexity about the leaders in your specific niche, your name is missing. Instead, you see generic startups (companies with zero legacy but loud marketing) being cited as the "top solutions."

This isn't a failure of your quality. It is a failure of translation.

We are witnessing the most significant tectonic shift in information retrieval since the birth of the search engine. We are moving from Search Engine Optimization (SEO), where humans hunt for links, to Large Language Model Optimization (LLMO), where machines generate answers.

I have spent 20+ years in Data Analysis and Business Intelligence. I don't look at this shift as "magic." I view it as a data processing challenge. And right now, the data suggests that if you are not optimizing for the machine's memory, you are becoming invisible.

Here is the blueprint for regaining control of your ground truth.

What is the Difference Between SEO and LLMO?

SEO (Search Engine Optimization) focuses on ranking web pages in search results by matching keywords to user queries, driving traffic via links. In contrast, LLMO (Large Language Model Optimization) focuses on helping AI models understand your brand as a semantic "entity," so that you are cited directly in generated answers rather than merely listed in search results.

The Shift from "Finder" to "Oracle"

For decades, Google acted as a librarian. You asked a question, and it pointed you to a shelf (a list of links) where you could find the answer yourself. SEO was the art of getting your book on the eye-level shelf.

Generative AI (ChatGPT, Gemini, Claude) acts differently. It is not a librarian; it is an oracle. It reads the books, synthesizes the information, and gives you the answer directly.

If your firm’s "book" is written in a language the AI cannot parse (unstructured data, crawlers blocked by robots.txt, or vague semantic connections), the Oracle ignores you. It doesn't matter if you've been in business for 30 years. To the Large Language Model (LLM), you do not exist.

This is why "The Invisible Authority" is the most dangerous position to be in right now. You have the expertise, but the AI startups have the structure.

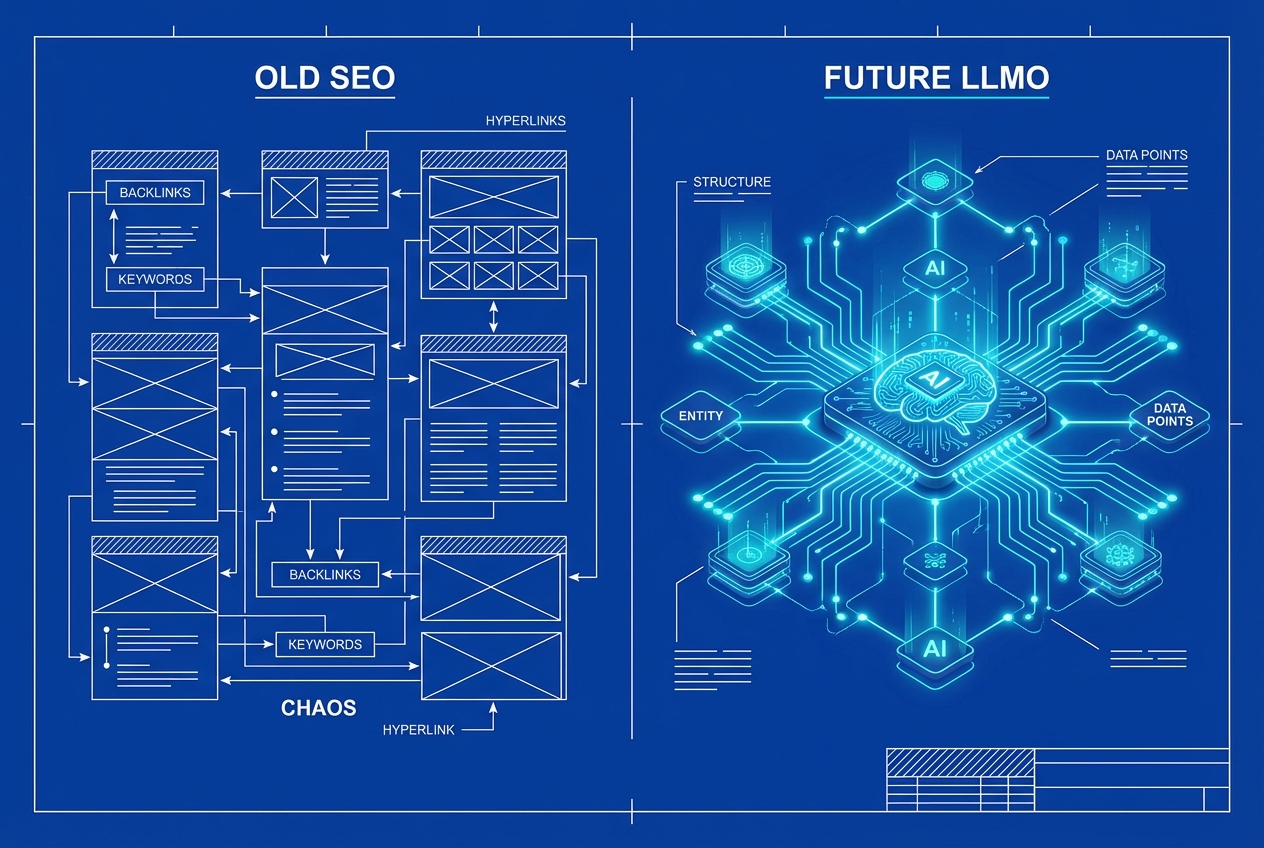

The Comparison: The Old Guard vs. The New Code

To visualize this shift, look at the architectural differences between the two disciplines:

Why Is My Established Authority Invisible to ChatGPT?

Established firms often fail in AI visibility because LLMs prioritize "machine-readable" structure over human reputation. If your site blocks AI crawlers via robots.txt or lacks Knowledge Graph Schema, the model cannot "learn" your expertise. Visibility requires translating your legacy authority into structured data governance that training algorithms can ingest.

The "Black Box" Fallacy

Many of the founders I work with, Legacy Owners protecting a fortress, believe that AI is simply "reading the internet." They assume that because their website is live, ChatGPT knows who they are.

This is a dangerous assumption.

LLMs do not "read" in real time like a human. They are trained on datasets. If your digital presence prevents that training, you are absent from the model's "worldview."

In my analysis, I frequently encounter three "silent killers" of legacy firms:

The Wall: Your robots.txt file is outdated, blocking the very crawlers (GPTBot, CCBot) that are trying to learn about you.

The Fog: Your content uses jargon and corporate fluff. AI thrives on "Information Gain": dense, unique facts. If you sound like everyone else, the model compresses you into the background noise.

The Disconnect: You lack structured data (Schema Markup). You haven't explicitly told the machine, "Andreas Höfelmeyer IS A Certified AI Search Architect" in code. You've left it to guess. And AI hates guessing.

I don't guess at algorithms; I analyze the data structure. The fix isn't more blog posts; it's better governance.
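To make "The Disconnect" concrete, here is a minimal sketch of what telling the machine "in code" can look like. The Python snippet builds a schema.org Person object as JSON-LD, the format usually embedded in a page inside a script tag of type application/ld+json. The name and job title come from this article and the topics are taken from its examples; the function name and the URL are placeholders I am assuming for illustration, not real addresses or a fixed method.

```python
import json


def build_person_jsonld(name, job_title, topics, url):
    """Return a schema.org Person description as a JSON-LD string."""
    person = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "knowsAbout": list(topics),
        "url": url,  # placeholder: replace with the real profile page
    }
    # ensure_ascii=False keeps characters such as "ö" human-readable
    return json.dumps(person, indent=2, ensure_ascii=False)


markup = build_person_jsonld(
    name="Andreas Höfelmeyer",
    job_title="Certified AI Search Architect",
    topics=["Data Analysis", "Strategic AI Implementation"],
    url="https://example.com/about",  # hypothetical URL
)
print(markup)
```

The point of the sketch is the explicit statement: the job title is a declared property of the entity rather than something a model has to infer from surrounding prose.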

How Do We Move from Keywords to Entities?

Optimizing for "Entities" means shifting focus from specific search phrases (keywords) to defining things (people, places, concepts) and their relationships. By using Schema Markup to link your brand to specific industry topics in the Knowledge Graph, you teach the AI model to associate your firm with those concepts as a factual authority.

The Knowledge Graph: The Brain of the Beast

In SEO, we chased "keywords." If you wanted to rank for "Data Analysis," you repeated that phrase.

In LLMO, we build "Entities."

An entity is a distinct object or concept that the AI understands. "Andreas Höfelmeyer" is an entity. "Data Analysis" is an entity. "AI-fy.me" is an entity.

Our job as "Strategic Architects" is to build bridges between these entities. We want the AI to calculate a high probability that when a user asks about "Strategic AI Implementation," the entity "Andreas Höfelmeyer" is the most logical connection.

The "LLMO Guardian" Approach

At AI-fy.me, we use a tool called the "LLMO Guardian" to enforce this structure. We don't just write text; we engineer the backend:

Schema Construction: We link your website to your LinkedIn and your Crunchbase profiles, creating a "Triangle of Truth."

The Answer Snippet: We ensure every core page has a 40-60 word definition (just like the ones in this blog post), effectively spoon-feeding the AI the exact summary we want it to quote.
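Both steps lend themselves to simple automated checks. The sketch below is not the LLMO Guardian itself, whose internals are not described here. It only illustrates the two ideas: a schema.org Organization object whose sameAs property points at the LinkedIn and Crunchbase profiles, and a word-count check for the 40-60 word answer snippet. Every URL and function name is a hypothetical placeholder.

```python
import json


def build_org_jsonld(name, website, profiles):
    """Link a website to external profiles through schema.org `sameAs`."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": website,
        "sameAs": list(profiles),
    }


def snippet_in_range(text, low=40, high=60):
    """True if the whitespace-separated word count lies within [low, high]."""
    return low <= len(text.split()) <= high


org = build_org_jsonld(
    name="AI-fy.me",
    website="https://example.com",  # placeholder, not the real site URL
    profiles=[
        "https://www.linkedin.com/company/example",  # hypothetical
        "https://www.crunchbase.com/organization/example",  # hypothetical
    ],
)
print(json.dumps(org, indent=2))

snippet = " ".join(["word"] * 45)  # stand-in for a real 45-word definition
print(snippet_in_range(snippet))
```

Counting words by splitting on whitespace is a deliberate simplification; it is enough to catch definitions that have drifted far outside the target range.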

This is how you translate offline reputation into online code.

What Is the "Ladder of Trust" for AI?

The "Ladder of Trust" is a strategic framework for building AI authority. It begins with Technical Availability (allowing bots to crawl), moves to Semantic Clarity (using Schema to define relationships), and culminates in Digital Authority (high-quality citations and consistent data across the web). Each rung ensures the AI trusts your data enough to recommend it.

Step 1: Awareness (Let them In)

You cannot be recommended if you lock the door. We must update your robots.txt rules to explicitly allow GPTBot (OpenAI), ClaudeBot (Anthropic), and PerplexityBot.

This signals to the AI companies: "We are open for business; come learn from us."
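As a rough sketch of what "letting them in" looks like in practice, the snippet below uses Python's standard urllib.robotparser module to test a robots.txt policy against the three crawler names above. The policy text, the /admin/ path, and the example.com URL are illustrative assumptions. User-agent tokens can change, so check each vendor's current documentation before relying on them.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Illustrative policy: explicitly allow the AI crawlers,
# keep a private area closed to every other bot.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: *
Disallow: /admin/
"""


def crawler_access(robots_txt, url, agents=AI_CRAWLERS):
    """Report, per crawler, whether the policy permits fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(ROBOTS_TXT, "https://example.com/services")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

Running the same function against your live robots.txt content is a quick way to find out whether an old blanket Disallow rule is still shutting these crawlers out.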

Step 2: The Bridge (Structure the Data)

This is where we implement the "Diagnosis." We audit your digital footprint to ensure your Name, Address, Phone, and, crucially, your Service Definitions are identical across the web. Any discrepancy reduces the model's confidence score (or "trust") in your entity.
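A consistency audit of this kind can be approximated in a few lines. The sketch below compares name, address, phone, and service definition across several listings after light normalization and reports every field whose values disagree. The listings, field names, and normalization rules are hypothetical examples; a real audit would pull these records from the actual directories and profiles.

```python
import re

FIELDS = ("name", "address", "phone", "service_definition")


def normalize(field, value):
    """Reduce formatting noise so only genuine differences are flagged."""
    if field == "phone":
        return re.sub(r"\D", "", value)  # keep digits only
    return re.sub(r"\s+", " ", value).strip().lower()


def find_discrepancies(listings):
    """Map each inconsistent field to the distinct normalized values found."""
    issues = {}
    for field in FIELDS:
        values = {normalize(field, rec[field]) for rec in listings.values()}
        if len(values) > 1:
            issues[field] = sorted(values)
    return issues


listings = {  # hypothetical records from two sources
    "website": {
        "name": "Example Consulting GmbH",
        "address": "1 Main Street, Berlin",
        "phone": "+49 30 1234567",
        "service_definition": "Strategic AI implementation for legacy firms",
    },
    "linkedin": {
        "name": "Example Consulting GmbH",
        "address": "1 Main Street,  Berlin",
        "phone": "+49 (30) 123-4567",
        "service_definition": "AI marketing services",
    },
}

issues = find_discrepancies(listings)
for field, values in issues.items():
    print(f"{field}: {values}")
```

In this example the phone numbers and addresses differ only in formatting, so they pass; the two service definitions genuinely disagree and are the only field reported.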

Step 3: The Transformation (Own the Narrative)

Finally, we create content that combines "The Architect" (structure) with "The Human" (stories). AI can generate facts, but it cannot generate your experience. By weaving your personal 20-year history into the technical content, we create "un-copyable" data points that the AI must cite to be accurate.

How Do I Future-Proof My Firm's Digital Legacy?

Future-proofing a legacy firm requires adopting a "Solution Company" mindset. This involves moving away from generic marketing hacks and toward strict data governance. By owning your platform (using low-code tools) and ensuring your "Ground Truth" data is pristine, you protect your reputation against algorithmic volatility and AI hallucinations.

The "Do Not Do" List

As you navigate this shift, you will be tempted by "Hype Bros" promising overnight rankings. Ignore them.

NO Heavy Dev: You do not need a custom-coded $50k website. We use low-code tools embedded in GoHighLevel to keep you agile.

NO Generic AI: Do not let a junior employee copy-paste from ChatGPT to your blog. That is "model cannibalism", feeding the AI its own output. It degrades your authority.

NO "Hype" Marketing: We do not promise magic. We promise engineering.

The Conclusion: Control Your Ground Truth

I built my career on 20+ years of Data Analysis. I have seen technologies rise and fall. But the principle of Garbage In, Garbage Out never changes.

If you feed the global AI models garbage (or nothing at all), you will get garbage results. But if you architect your presence with precision, transparency, and humanity, you will not just be found; you will be recommended.

You are not building a startup; you are protecting a fortress.

It is time to reinforce the walls with code.